What is prompt-tuning?

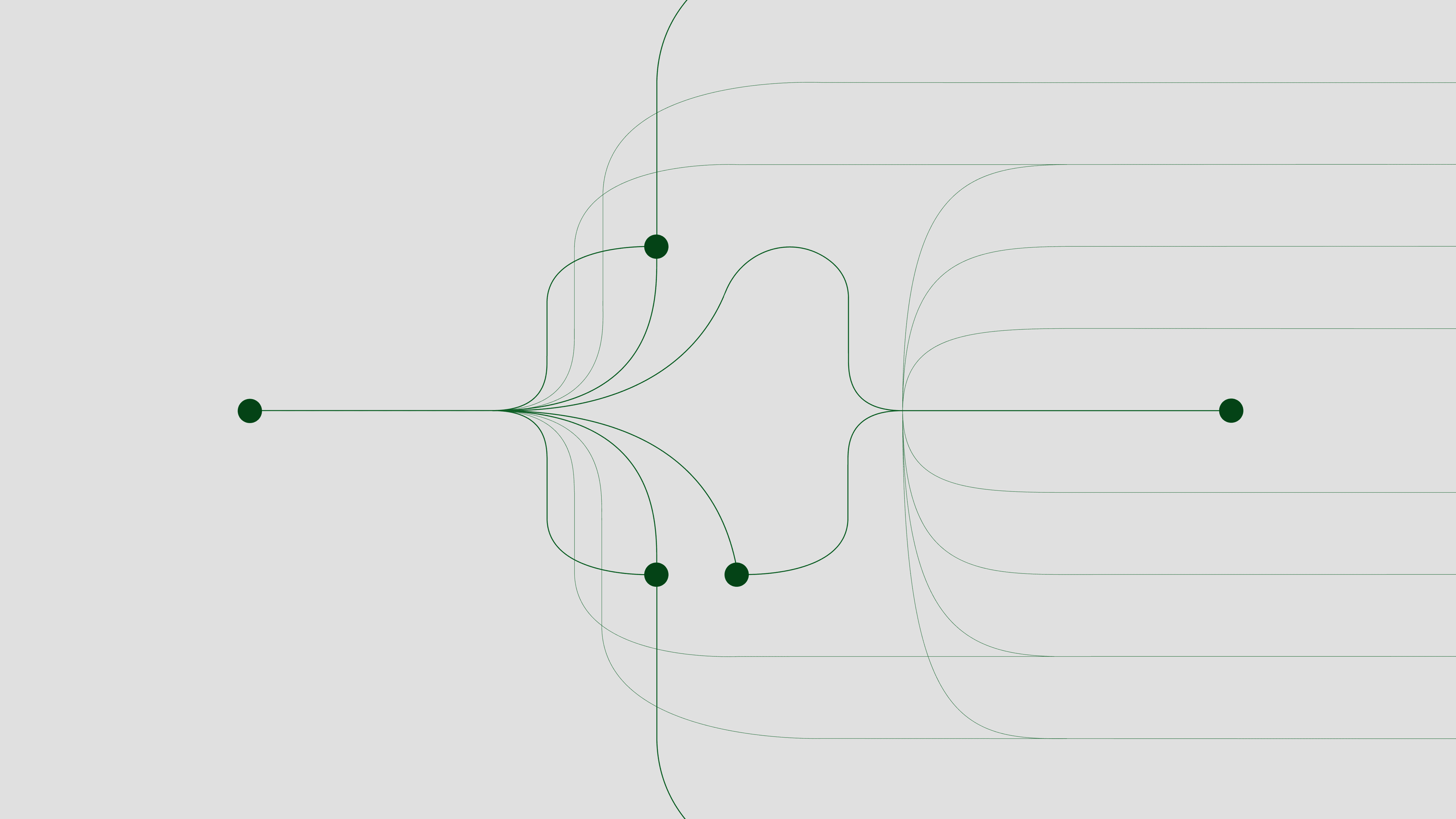

Prompt-tuning is an efficient, low-cost way of adapting an AI foundation model to new downstream tasks without retraining the model and updating its weights.

Foundation models are set to usher in the next wave of AI enterprise applications. These large, reusable models have been pretrained on vast amounts of internet data, which makes them easier to customize for things like analyzing legal contracts or detecting fraud in financial documents.

Until recently, fine-tuning was the best way to redeploy one of these pretrained models for specialized tasks. You gathered and labeled examples of the target task and fine-tuned your model rather than training an entirely new one from scratch. But as foundation models grow relentlessly larger, a simpler, more energy-efficient technique has emerged: prompt-tuning.

In prompt-tuning, the best cues, or front-end prompts, are fed to your AI model to give it task-specific context. The prompts can be extra words introduced by a human, or AI-generated numbers introduced into the model's embedding layer. Like crossword puzzle clues, both prompt types guide the model toward a desired decision or prediction. Prompt-tuning allows a company with limited data to tailor a massive model to a narrow task. It also eliminates the need to update the model’s billions (or trillions) of weights, or parameters.

Redeploying an AI model without retraining it can cut computing and energy use by at least 1,000 times, saving thousands of dollars, said IBM’s David Cox, head of Exploratory AI Research and co-director of the MIT-IBM Watson AI Lab. “With prompt-tuning, you can rapidly spin up a powerful model for your particular needs,” he said. “It also lets you move faster and experiment.”

Prompt-tuning originated with large language models but has since expanded to other foundation models, like transformers that handle other sequential data types, including audio and video. Prompts may be snippets of text, streams of speech, or blocks of pixels in a still image or video.

“It’s a fast and sustainable way of extracting knowledge from these large models,” said IBM’s Rameswar Panda, an expert on prompt-tuning at the MIT-IBM lab. “We don’t touch the model. It’s frozen.”

Initially, prompts were designed by hand, through what’s known as prompt-engineering. Let’s say you want to adapt a language model for translation. You give the model a description of the target task or a few examples: the instruction “Translate English to French,” for example, followed by the prompt “cheese.” The model then outputs its prediction: “fromage.” This manual prompt primes the model to retrieve other French words from its memory banks. If the task is difficult enough, dozens of prompts might be needed.
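A hand-written prompt is ultimately just text assembled before it reaches the model. The minimal sketch below builds a few-shot translation prompt of the kind described above; the function name, the `=>` separator, and the example word pairs are illustrative choices, not part of any particular model's interface.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble a hand-written ("hard") prompt: instruction, solved examples, then the query."""
    lines = [instruction]
    for source, target in examples:
        lines.append(f"{source} => {target}")
    # Leave the answer blank; the language model is expected to complete this line.
    lines.append(f"{query} =>")
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    "Translate English to French:",
    [("sea otter", "loutre de mer"), ("peppermint", "menthe poivrée")],
    "cheese",
)
print(prompt)
```

Every part of this prompt is readable text, which is exactly what distinguishes it from the learned prompts discussed next.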

Prompt-engineering took off with OpenAI’s GPT-3 (Generative Pretrained Transformer 3), a behemoth roughly 10 times larger than any previous non-sparse language model. In a 2020 paper, researchers at OpenAI showed that GPT-3’s scale, 175 billion parameters, allowed it to perform specialized tasks with only a smattering of words introduced at inference time. On some tasks, GPT-3 performed nearly as well as models fine-tuned on labeled data, with no retraining at all.

Hand-crafted prompts were soon surpassed by AI-designed prompts consisting of strings of numbers. In a paper the following year, Google researchers introduced so-called “soft” prompts, designed by an AI, that outperformed human-engineered “hard” prompts.
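Soft prompts are trained much the way model weights usually are, by gradient descent, except that only the prompt vectors change. The minimal sketch below assumes a toy "model" that mean-pools its input embeddings and applies a fixed linear classifier; the vocabulary, weights, and four-example dataset are made up, and a real system would backpropagate through a full transformer instead.

```python
import math

# Frozen parts of a toy "model". All numbers are made up for illustration.
EMBEDDINGS = {
    "great": [1.0, 0.2, 0.0],
    "awful": [-1.0, 0.1, 0.3],
    "movie": [0.0, 0.5, -0.2],
    "plot": [0.1, -0.4, 0.2],
}
W = [0.8, -0.5, 0.3]  # frozen classifier weights
B = -2.0              # frozen bias; the untuned model leans toward "negative"


def forward(prompt, tokens):
    """Prepend soft-prompt vectors to the token embeddings, mean-pool, and score."""
    seq = prompt + [EMBEDDINGS[t] for t in tokens]
    pooled = [sum(vec[i] for vec in seq) / len(seq) for i in range(len(W))]
    z = sum(w * h for w, h in zip(W, pooled)) + B
    return 1.0 / (1.0 + math.exp(-z)), len(seq)


def loss(prompt, data):
    """Average binary cross-entropy of the frozen model given a soft prompt."""
    total = 0.0
    for text, label in data:
        p, _ = forward(prompt, text.split())
        total -= label * math.log(p) + (1 - label) * math.log(1 - p)
    return total / len(data)


def tune_prompt(data, n_prompt=2, lr=2.0, steps=300):
    """Gradient descent on the soft prompt only; EMBEDDINGS, W and B are never updated."""
    prompt = [[0.0] * len(W) for _ in range(n_prompt)]
    for _ in range(steps):
        grad = [[0.0] * len(W) for _ in range(n_prompt)]
        for text, label in data:
            p, seq_len = forward(prompt, text.split())
            # dLoss/dz = p - label, and dz/d(prompt[j][i]) = W[i] / seq_len.
            for j in range(n_prompt):
                for i in range(len(W)):
                    grad[j][i] += (p - label) * W[i] / seq_len / len(data)
        for j in range(n_prompt):
            for i in range(len(W)):
                prompt[j][i] -= lr * grad[j][i]
    return prompt


DATA = [("great movie", 1), ("great plot", 1), ("awful movie", 0), ("awful plot", 0)]
untuned = [[0.0] * len(W) for _ in range(2)]
soft_prompt = tune_prompt(DATA)
print(f"loss with zero prompt:  {loss(untuned, DATA):.3f}")
print(f"loss with tuned prompt: {loss(soft_prompt, DATA):.3f}")
```

Because this toy model mean-pools its inputs, the learned prompt can only shift the classifier's score; in a transformer, prompt vectors interact with every input token through attention, which gives them far more leverage.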

Around the same time, Stanford researchers introduced prefix-tuning, another automated prompt-design method that lets a single frozen model take on one task after another, each with its own learned prefix. Prefix-tuning combines soft prompts with prompts injected into the layers of the deep learning model for added flexibility. Though prompt-tuning is more efficient, both techniques let you freeze the model and skip expensive retraining.
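Back-of-the-envelope arithmetic shows why prompt-tuning is the leaner of the two. This sketch assumes prefix-tuning stores a key vector and a value vector for each prefix position in every attention layer (the layout used by common implementations) and ignores any reparameterization network used only during training; the model dimensions are hypothetical.

```python
def prompt_tuning_params(prompt_len, d_model):
    """Prompt-tuning: one trainable vector per prompt position, at the input layer only."""
    return prompt_len * d_model


def prefix_tuning_params(prefix_len, d_model, n_layers):
    """Prefix-tuning (as commonly implemented): a key and a value vector per position, per layer."""
    return prefix_len * n_layers * 2 * d_model


# Hypothetical model shape: 24 layers, 1,024-dimensional hidden states, 20 prompt positions.
print(prompt_tuning_params(20, 1024))      # 20480
print(prefix_tuning_params(20, 1024, 24))  # 983040
```

Under these assumptions the prefix holds 48 times as many trainable numbers as the soft prompt, yet both are tiny next to the hundreds of millions of frozen weights in a model of this shape.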

Unlike hard prompts, AI-designed soft prompts are unrecognizable to the human eye. Each prompt consists of an embedding, or string of numbers, that distills knowledge from the larger model. Whether high-level or task-specific, the prompt acts as a substitute for additional training data. Researchers recently estimated that a good language classifier prompt is worth hundreds to thousands of extra data points.
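One simple way to see why soft prompts resist reading is to map each vector back to its nearest vocabulary word. A hard prompt's vectors are exact rows of the embedding table, so they match a word perfectly; a learned soft prompt's vectors generally match no word exactly. In the sketch below, the three-word vocabulary, its 3-dimensional embeddings, and the "learned" soft-prompt values are all made up.

```python
import math

# Hypothetical frozen vocabulary embeddings (made-up 3-d vectors).
VOCAB = {
    "translate": [0.9, 0.1, 0.0],
    "french": [0.1, 0.8, 0.3],
    "cheese": [0.0, 0.2, 0.9],
}


def cosine(a, b):
    """Cosine similarity between two nonzero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def nearest_token(vector):
    """Return the vocabulary word whose embedding points most nearly the same way, and the score."""
    word = max(VOCAB, key=lambda w: cosine(VOCAB[w], vector))
    return word, cosine(VOCAB[word], vector)


hard_prompt = [VOCAB["translate"], VOCAB["french"]]        # real words: exact table rows
soft_prompt = [[0.52, -0.31, 0.77], [-0.12, 0.64, -0.45]]  # "learned" numbers (made up)

for vec in hard_prompt + soft_prompt:
    word, sim = nearest_token(vec)
    print(f"nearest word: {word!r}, cosine similarity {sim:.2f}")
```

The hard-prompt vectors come back with a similarity of 1.00, while each soft-prompt vector only loosely resembles its nearest word, so reading the soft prompt as text says little about what it actually does.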

One drawback of prompt-tuning is its lack of interpretability. The AI discovers prompts optimized for a given task but can’t explain why it chose those embeddings. Like deep learning models themselves, soft prompts are opaque.

“You’re learning the prompts but there’s very little visibility into how the model is helping,” said Panda. “It’s still a mystery.”

Foundation models are finding new enterprise applications, from drug and materials discovery to unpacking technical documentation like car manuals. Prompt-tuning is evolving with them.

One area is multi-task learning. Foundation models often need to pivot quickly, from answering customer questions to identifying negative comments in online reviews. Rather than design a unique prompt for each task, researchers are discovering ways to create universal prompts that can be easily recycled.

“Think of it as applying multi-task transfer learning to prompts,” said Panda. “You learn a single prompt that consolidates task-shared knowledge so you can quickly adapt the model.”

In an upcoming paper at the International Conference on Learning Representations (ICLR), Panda and his colleagues show that their Multi-task Prompt Tuning (MPT) method outperformed other methods and even did better than models fine-tuned on task-specific data. Instead of spending thousands of dollars to retrain a 2-billion-parameter model for a specialized task, MPT lets you customize the model for less than $100, said Panda.
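One way to make a single prompt serve many tasks is to keep one shared prompt matrix and give each task only a small, cheap adjustment. The sketch below assumes that adjustment is an element-wise product with a task-specific rank-one matrix built from two vectors; it is a simplified illustration of the shared-prompt idea Panda describes, not a reproduction of the MPT paper's full recipe, and it omits how the shared prompt itself is learned. All sizes are hypothetical.

```python
def make_task_prompt(shared, u, v):
    """Task prompt = shared prompt multiplied element-wise by the rank-one matrix u v^T."""
    return [[shared[i][j] * u[i] * v[j] for j in range(len(v))] for i in range(len(u))]


def separate_prompt_params(n_tasks, prompt_len, d_model):
    """Trainable numbers if every task learns its own full prompt."""
    return n_tasks * prompt_len * d_model


def shared_prompt_params(n_tasks, prompt_len, d_model):
    """One shared prompt, plus a length-prompt_len vector u and a length-d_model vector v per task."""
    return prompt_len * d_model + n_tasks * (prompt_len + d_model)


shared = [[1.0, 1.0, 1.0], [1.0, 1.0, 1.0]]  # 2 prompt positions x 3 dims (toy sizes)
print(make_task_prompt(shared, u=[1.0, 2.0], v=[0.5, 1.0, 2.0]))
# [[0.5, 1.0, 2.0], [1.0, 2.0, 4.0]]

# Hypothetical sizes: 10 tasks, 100 prompt positions, 768-dimensional embeddings.
print(separate_prompt_params(10, 100, 768))  # 768000
print(shared_prompt_params(10, 100, 768))    # 85480
```

Under these assumptions each additional task costs only 868 trainable numbers instead of 76,800, which is one reason a shared prompt can be adapted to new tasks so cheaply.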

Another up-and-coming area of research involves finding prompts on the fly as an AI model continually learns new tasks and concepts. Acquiring new knowledge involves updating the model on new data, but sometimes old knowledge gets overwritten in what’s known as catastrophic forgetting.

In a pre-print paper on arXiv, IBM researchers show that a technique called CODA-Prompt can discover prompts for consecutive, never-seen-before tasks, like classifying drawings, then paintings, then photos, without the model forgetting what it originally learned.

This type of flexible prompt for continual learning allows you to fix mistakes as they arise, without retaining the data and running afoul of privacy laws. “Mistakes might be observed in a chat session from user data,” said Leonid Karlinsky, an IBM researcher at the MIT-IBM Lab who co-developed the technique. “CODA-Prompt lets you correct the mistakes without holding on to that personal data.”
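Roughly speaking, CODA-Prompt assembles each prompt from a pool of components, weighting every component by how relevant it is to the current input; learning a new task adds fresh components while the older ones are frozen, so earlier knowledge is not overwritten and no old data needs to be stored. The sketch below is a simplified, hypothetical rendering of that idea: the class and method names, the tiny vectors, and the use of plain cosine similarity between an attention-masked query and each key are assumptions for illustration, and the gradient updates themselves are not shown.

```python
import math


def cosine(a, b):
    """Cosine similarity, returning 0.0 when either vector is all zeros."""
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    if na == 0.0 or nb == 0.0:
        return 0.0
    return sum(x * y for x, y in zip(a, b)) / (na * nb)


class PromptPool:
    """A growing set of prompt components, each paired with a key and an attention vector."""

    def __init__(self):
        self.keys, self.attns, self.components = [], [], []
        self.n_frozen = 0  # components learned on earlier tasks; training must skip them

    def start_task(self, keys, attns, components):
        """Freeze everything learned so far, then add fresh components for the new task."""
        self.n_frozen = len(self.components)
        self.keys += keys
        self.attns += attns
        self.components += components

    def trainable(self):
        """Indices of components the current task is allowed to update."""
        return list(range(self.n_frozen, len(self.components)))

    def prompt_for(self, query):
        """Weight each component by how well the attended query matches its key, then sum."""
        weights = [
            cosine([q * a for q, a in zip(query, attn)], key)
            for key, attn in zip(self.keys, self.attns)
        ]
        dim = len(self.components[0])
        prompt = [sum(w * c[i] for w, c in zip(weights, self.components)) for i in range(dim)]
        return prompt, weights


pool = PromptPool()
pool.start_task(keys=[[1.0, 0.0]], attns=[[1.0, 1.0]], components=[[1.0, 0.0, 0.0]])  # e.g. drawings
pool.start_task(keys=[[0.0, 1.0]], attns=[[1.0, 1.0]], components=[[0.0, 1.0, 0.0]])  # e.g. paintings
prompt, weights = pool.prompt_for([1.0, 0.0])  # query from a frozen image encoder (made up)
print(prompt, weights, pool.trainable())
# [1.0, 0.0, 0.0] [1.0, 0.0] [1]
```

A drawing-like query still retrieves the drawing component after the painting task is added, while only the newest component remains open to training.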

Finally, prompt-tuning also shows promise as a quick and low-cost tool to mitigate algorithmic bias. Because AI models are trained on real-world data, they inevitably absorb society’s biases, which can lead to decisions that perpetuate and exacerbate inequities in everything from healthcare to hiring. IBM researchers recently presented a pair of papers at the 2022 NeurIPS conference aimed at counteracting race and gender bias in large language and vision models using AI-designed prompts.

One of the researchers’ methods, called FairIJ, identifies the most biased data points in the model’s training set and has the model set them aside via prompts appended to the model’s original prompts. Tested on a salary-prediction task, a model tuned with FairIJ achieved more accurate, less biased results than several top bias-mitigation methods, the researchers found.
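The identification step, finding the training points that most drive a biased outcome, can be illustrated with influence scores. FairIJ's name refers to the infinitesimal jackknife, which approximates such influence rather than retraining; the minimal sketch below swaps in exact leave-one-out refitting instead, which is only practical for tiny models, and it does not show the prompt-based step of setting points aside. The two-feature salary model, the "group gap" fairness measure, and the data are all hypothetical.

```python
def fit(points):
    """Least squares for salary = w * experience + c * group + b (group is 0 or 1)."""
    n = len(points)
    mx = sum(x for x, _, _ in points) / n
    mg = sum(g for _, g, _ in points) / n
    my = sum(y for _, _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _, _ in points)
    sgg = sum((g - mg) ** 2 for _, g, _ in points)
    sxg = sum((x - mx) * (g - mg) for x, g, _ in points)
    sxy = sum((x - mx) * (y - my) for x, _, y in points)
    sgy = sum((g - mg) * (y - my) for _, g, y in points)
    det = sxx * sgg - sxg * sxg
    w = (sxy * sgg - sxg * sgy) / det  # Cramer's rule on the 2x2 normal equations
    c = (sxx * sgy - sxg * sxy) / det
    return w, c, my - w * mx - c * mg


def group_gap(model):
    """Predicted pay difference between two people who differ only in group."""
    _, c, _ = model
    return abs(c)


def rank_by_influence(train):
    """Leave-one-out: how much does dropping each point shrink the group gap?"""
    base = group_gap(fit(train))
    scores = [
        (base - group_gap(fit(train[:i] + train[i + 1:])), i) for i in range(len(train))
    ]
    return sorted(scores, reverse=True)


# (years of experience, group, salary in $1,000s); all values are made up.
TRAIN = [
    (1, 0, 50), (3, 0, 60), (5, 0, 70), (7, 0, 80),
    (1, 1, 50), (3, 1, 60), (5, 1, 70), (7, 1, 95),  # last record is skewed
]
print(group_gap(fit(TRAIN)))           # 3.75
print(rank_by_influence(TRAIN)[0][1])  # 7, the skewed record
```

Dropping the skewed record erases the gap entirely, so it tops the ranking; a method like FairIJ would then neutralize such points without retraining the model.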

Another method, FairReprogram, gives an AI trained on beauty magazines the equivalent of gender-sensitivity training via prompts that are also attached to the original prompts. To reorient a classifier that had incorrectly learned to label only women with blonde hair as “women,” IBM researchers added an AI-designed border of black pixels to a photo of a woman with brown hair. The pixels, they found, were able to trick the model into expanding its notion of women to include those with brown hair.
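For images, the prompt is a set of pixels rather than words. The sketch below shows only how a border-shaped prompt is attached to an input; the 4-by-4 grayscale image, the function name, and the choice to overwrite edge pixels (rather than pad the image outward) are assumptions. In the method described above, the border values are designed by an AI while the classifier stays frozen; that optimization step is not shown.

```python
def add_border_prompt(image, border, width):
    """Replace a frame `width` pixels wide around the edge with prompt values; keep the center."""
    h, w = len(image), len(image[0])
    out = []
    for r in range(h):
        row = []
        for c in range(w):
            on_edge = r < width or r >= h - width or c < width or c >= w - width
            row.append(border[r][c] if on_edge else image[r][c])
        out.append(row)
    return out


photo = [[0.5] * 4 for _ in range(4)]  # a tiny grayscale "photo" (made up)
frame = [[0.0] * 4 for _ in range(4)]  # prompt values; 0.0 is black in this toy
prompted = add_border_prompt(photo, frame, width=1)
for row in prompted:
    print(row)
```

The original photo is left untouched and only the copy passed to the classifier carries the frame, mirroring how a prompt steers a frozen model without changing its weights or its input data.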

Prompt-tuning not only shrinks the cost of tailoring large models to new applications, said IBM’s Cox, but it can also correct the model’s behavior, in this case by mitigating bias.

“Prompt-tuning allows you to have your cake and eat it, too,” he said. “You can adapt your model to specialized tasks faster and more sustainably, while making it easier to find and fix problems.”