IBM’s new AI outperforms competition in table entry search with question-answering

Making sense of organizational data is crucial for any company. But it’s not always easy. Whether it’s financial reports or employees’ personal information, data is often buried in tables inside PDFs that are not easily searchable, and digging it out wastes time.

We want to help—with artificial intelligence.

Our team at IBM Research has developed an AI model that allows users to ask questions in natural language to find the information tucked away in tables, faster and more accurately than ever before. In a recent paper [1] presented at NAACL 2021, we describe our machine-learning system dubbed Row and Column Intersection (RCI) that consistently outperforms our competitors across several academic benchmarks. The corresponding model and code have been released on GitHub.

Without using any specialized pre-trained models, we were able to achieve 98 percent accuracy in finding cell values in tables for lookup questions (those with an answer in a cell) and 89.8 percent accuracy on all types of questions from the industry-leading WikiSQL benchmark. The model also outperforms current cutting-edge approaches, like Google’s TAPAS and Facebook’s TaBERT.

Our AI could be used to locate tables over large corpora and identify cell values in tables in different domains, from finance to health and more. Companies using spreadsheets can make use of this technology to access relevant information with speed and efficiency.

What’s in that cell?

For years now, insights engines such as Watson Discovery have enabled business analytics and question answering on complex documents such as PDFs with a mix of text, tables, charts and other semi-structured information. Recent progress in machine reading comprehension has greatly improved the accuracy of question answering, making it a boon for production systems. The most notable innovation has been the application of transformer-based language models such as BERT that quickly and accurately identify precise answers in text passages.

But tabular data requires different treatment.

Tables are semi-structured objects: they are usually organized to represent information about a specific type of entity in rows (say, a drug) and the entity’s attributes (possible side effects, manufacturer, price, etc.) in columns. The header row contains hints for understanding those underlying relations. Uncovering the table structure is crucial to understanding the information within, and that’s tricky for machine reading comprehension.

We’ve been trying to improve table data extraction for a while. Our Table QA solution follows our previously developed technology, Smart Document Understanding (SDU). Launched in 2019, it was recently integrated into Watson Discovery and can efficiently extract tables from complex documents. Our clients love this tech because it uncovers a wealth of information otherwise inaccessible in enterprise search engines.

Still, SDU struggles with giving the user easily understandable results. Once the table is extracted, the current solutions integrated in Watson Discovery use an information retrieval approach to rank tables in response to search queries. The user is then expected to skim through the tables and locate the relevant cell values, a rather tedious and time-consuming task.

That’s where the new Row and Column Intersection makes a difference—by rapidly and accurately highlighting the cell with the answer.

To achieve this, the model builds upon machine reading comprehension (MRC) technology, typically used by search engines to answer questions about passages of text. We’ve extended it to understand tables and their structure as well, so that it handles both the text in a user’s question and the data in the extracted tables.

For instance, if you were to input a series of financial reports for a company X as PDFs into Watson Discovery Service, you could ask the system: “What is the income of X per common share in 2011?” The AI would return a list of tables, leaving it to the user to inspect their content.

Getting to that answer… faster

To do this more efficiently, researchers have been looking into transformer-based architectures, a class of machine learning models that includes Facebook’s TaBERT and Google’s TAPAS. Properly trained transformers should be capable of encoding the tabular structure, taking into account the context of cells to provide meaningful representations, while also being fine-tuned for the question-answering task.

This is exactly what our transformer does.

RCI relies on neural language models such as ALBERT that have been pre-trained on web-scale corpora and fine-tuned on table question-answering tasks. For each row and column in a table, it estimates the probability that the row or column is relevant to the question. The probabilities are used either to answer questions directly or to highlight the relevant regions of tables as a heatmap, helping users easily locate the answers.
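To make the heatmap idea concrete, here is a minimal Python sketch. It assumes the per-row and per-column relevance probabilities have already been produced by a fine-tuned model; the numbers below are made up for illustration. Multiplying the two probabilities to score a cell is also an assumption of this sketch, not necessarily the combination rule RCI uses.

```python
from typing import List, Tuple


def cell_heatmap(row_probs: List[float], col_probs: List[float]) -> List[List[float]]:
    """Score each cell as (row relevance) * (column relevance).

    The product rule is an illustrative assumption, not the published method.
    """
    return [[r * c for c in col_probs] for r in row_probs]


def hottest_cell(heatmap: List[List[float]]) -> Tuple[int, int]:
    """Return the (row, column) position of the highest-scoring cell."""
    positions = [(i, j) for i in range(len(heatmap)) for j in range(len(heatmap[i]))]
    return max(positions, key=lambda pos: heatmap[pos[0]][pos[1]])


# Hypothetical model outputs for a table with 3 rows and 3 columns.
row_probs = [0.05, 0.90, 0.10]
col_probs = [0.80, 0.15, 0.05]

heatmap = cell_heatmap(row_probs, col_probs)
best = hottest_cell(heatmap)

for row in heatmap:
    print(" ".join(f"{score:.3f}" for score in row))
print("hottest cell (row, column):", best)
```

A user interface could shade each cell by its score, so the most likely answer region stands out even when the top cell is wrong.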

Say the system is given tables about historic video games and the user asks: “What is the name of the game developed by Interplay that can be played on IBM PC?”

The system would first scan the content of the table left to right to identify the column that might contain the answer and select the column “title.” Then it would scan the rows. Finally, the AI would return the answer “The Lord of the Rings Volume 2” located at the intersection of both selections, as illustrated in this animation:

Watch this video on YouTube - Question Answering Over Tables Using Row-Column Interaction Model.

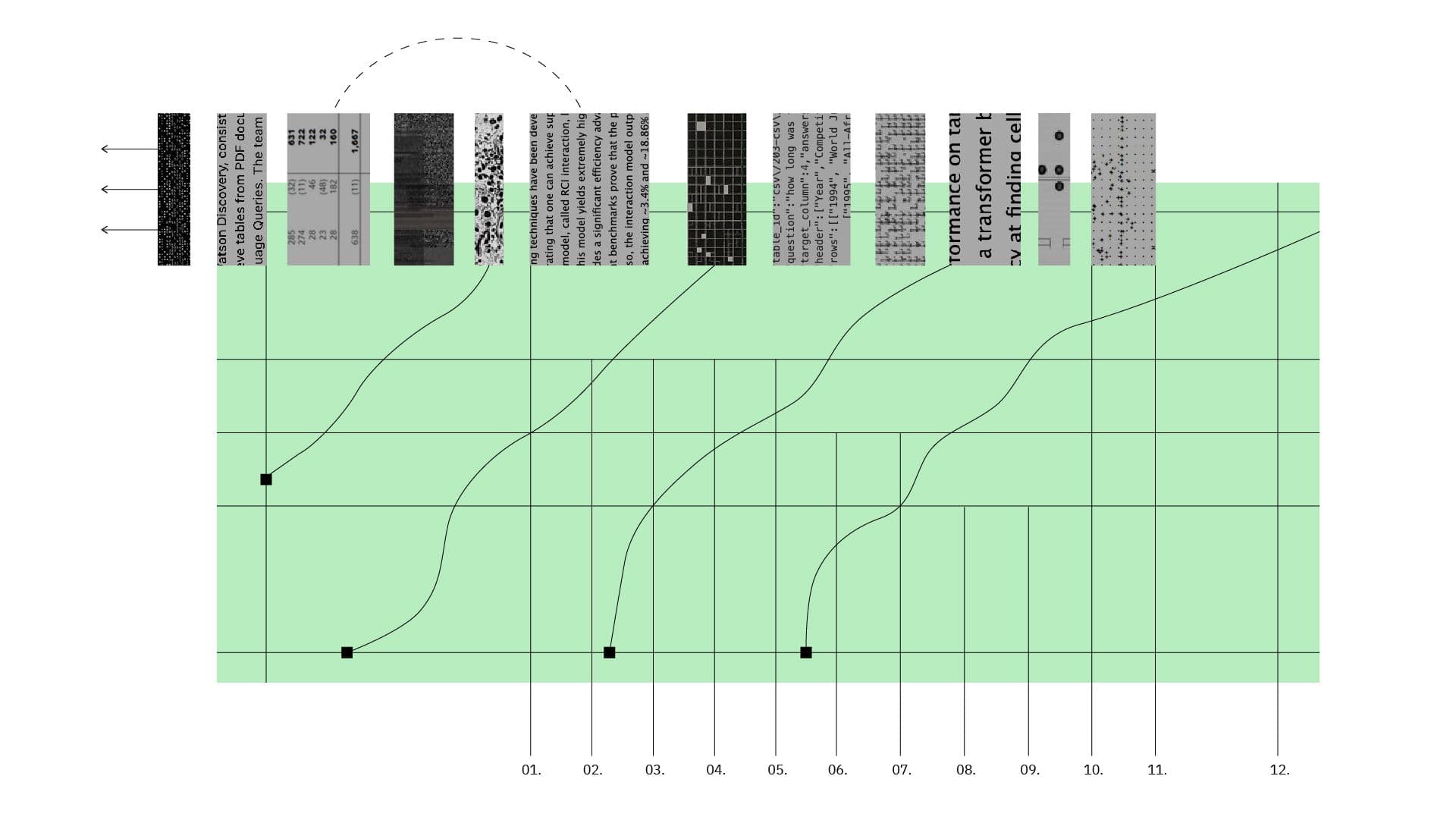
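The column-then-row selection in the video game example can be sketched in a few lines of Python. Everything here apart from the “title” column and the answer string is a placeholder: the other column names, the other rows, and the relevance scores are hypothetical stand-ins for what a trained model would see and produce.

```python
from typing import List


def answer_cell(
    header: List[str],
    rows: List[List[str]],
    col_scores: List[float],
    row_scores: List[float],
) -> str:
    """Return the cell at the intersection of the best column and best row."""
    best_col = max(range(len(header)), key=lambda j: col_scores[j])
    best_row = max(range(len(rows)), key=lambda i: row_scores[i])
    return rows[best_row][best_col]


# Toy table; only "title" and the answer come from the example in the text.
header = ["title", "developer", "platform"]
rows = [
    ["Placeholder Game A", "Placeholder Studio", "Amiga"],
    ["The Lord of the Rings Volume 2", "Interplay", "IBM PC"],
    ["Placeholder Game B", "Interplay", "Console"],
]

# Hypothetical relevance scores for the question
# "What is the name of the game developed by Interplay
#  that can be played on IBM PC?"
col_scores = [0.91, 0.05, 0.04]   # "title" is judged most relevant
row_scores = [0.02, 0.95, 0.30]   # the second row matches both constraints

answer = answer_cell(header, rows, col_scores, row_scores)
print(answer)
```

Scoring rows and columns separately keeps the number of model calls proportional to rows plus columns rather than to the number of cells.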

Each row and column in the table is represented by a vector of numbers that captures its meaning. The RCI representation model (shown in Figure 1) provides a significant efficiency advantage by pre-computing embeddings for existing tables and storing them in an index. This way, the pre-processing of tables into embeddings is done only once at indexing time, making real-time retrieval and QA extremely fast.
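The index-once, query-many pattern described above might look roughly like the following Python sketch. It is not the released RCI code: the `embed` function is a toy bag-of-words stand-in for a neural encoder, the `RowColumnIndex` class and its methods are hypothetical names, and the search is a plain linear scan rather than a real vector index.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy stand-in for a neural encoder: a bag-of-words count vector."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class RowColumnIndex:
    """Hypothetical index holding one pre-computed vector per row and column."""

    def __init__(self) -> None:
        self.entries: List[Tuple[str, Counter]] = []

    def add_table(self, table_id: str, header: List[str], rows: List[List[str]]) -> None:
        # Done once, at indexing time: embed every row and every column.
        for i, row in enumerate(rows):
            text = " ".join(f"{h} {v}" for h, v in zip(header, row))
            self.entries.append((f"{table_id}/row{i}", embed(text)))
        for j, name in enumerate(header):
            text = " ".join([name] + [row[j] for row in rows])
            self.entries.append((f"{table_id}/col{j}", embed(text)))

    def search(self, question: str, k: int = 3) -> List[str]:
        # Done at query time: only the question needs to be embedded.
        q = embed(question)
        ranked = sorted(self.entries, key=lambda e: cosine(q, e[1]), reverse=True)
        return [key for key, _ in ranked[:k]]


index = RowColumnIndex()
index.add_table(
    "games",
    ["title", "developer", "platform"],
    [
        ["Placeholder Game A", "Placeholder Studio", "Amiga"],
        ["The Lord of the Rings Volume 2", "Interplay", "IBM PC"],
        ["Placeholder Game B", "Interplay", "Console"],
    ],
)
hits = index.search("Interplay IBM PC", k=len(index.entries))
row_hits = [key for key in hits if "/row" in key]
print(row_hits)
```

Because the table vectors are stored ahead of time, adding more questions only costs one extra embedding and one similarity pass each.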

Our team is now focusing on applying the RCI technology to Watson Discovery’s question-answering capability, which currently can only provide answers about passages of text. The challenge is to make our technology robust enough to work on noisy data in tables and efficient across various topics.

We are also exploring the use of Retrieval Augmented Generation and Dense Passage Retrieval to approach the end-to-end table QA task. In this setup, dense passage retrieval finds the relevant sections within multiple tables, and a sequence-to-sequence model such as BART reads the retrieved content and generates an answer using implicit reasoning rather than matching it in the table itself.

This is a new, exciting frontier of AI, a scenario in which text and structured data are both used by an AI system to answer questions and provide insights for business analytics. Our aim is to push the boundaries of this field further than ever before.

References

[1] Glass, M., Canim, M., Gliozzo, A., et al. Capturing Row and Column Semantics in Transformer Based Question Answering over Tables. arXiv (2021).
